Purpose-Built AI: From Theory to Practice

by Isaac Greszes, Eleos

This 4-part series has outlined how to evaluate, test, and use AI solutions, emphasizing outcome relevance, workflow fit in regulated environments, architectural scalability, and governance discipline. That framework was intentionally rigorous. In a market crowded with pilots and proofs of concept, it reflects the reality that AI outcomes are not accidental; they are the result of deliberate design choices.

This final chapter shares a real-world example of AI implementation: Eleos’ Polaris AI engine.

A Reference Implementation

One example of how these principles are applied in practice is Eleos’ Polaris AI engine.

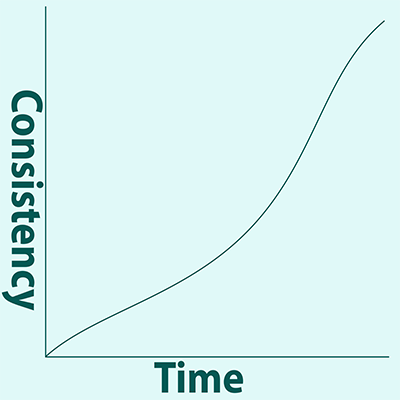

Polaris was developed over more than five years to support regulated conversational care. Rather than relying solely on general-purpose language models, it combines commercial-grade multimodal infrastructure with proprietary clinical intelligence layers that encode documentation logic, reasoning patterns, and safety heuristics.

Key elements of this approach include:

- Layered architecture, separating foundational AI capabilities from clinical logic and governance controls.

- Expert-led refinement, with licensed clinicians continuously validating and updating clinical rules.

- Application-layer tuning, allowing the system to improve without retraining on customer data.

- Governance-by-design, with explicit boundaries around data use, monitoring, and risk management.

Clinical Control

Importantly, Polaris is not positioned as a fully autonomous system. Clinicians remain in control, using AI as a collaborative tool that reduces administrative burden while preserving clinical judgment.

This design reflects a broader principle: in regulated care environments, trust and adoption depend as much on restraint and transparency as on technical capability.

Applicability in Care at Home

Platforms built to handle high-variability conversational care also bring structural advantages to care at home environments. Such platforms:

- Are designed to operate in unstructured, field-based settings.

- Encode clinical reasoning rather than relying on generic text generation.

- Incorporate governance and safety controls suited to regulated care.

For executives navigating pilot fatigue, this distinction matters. Platforms designed as infrastructure — rather than experiments — are better positioned to adapt responsibly as care at home AI adoption matures.

Final Thoughts

AI is here, and it’s here to stay. Care at home agencies need to look to AI solutions to stay competitive. Knowing which solutions to review, what to look for, and how to move beyond the pilot phase begins with finding Purpose-Built AI. Many thanks to our friends at Eleos for their expertise on this topic. Read the 4-part series.

# # #

About Eleos

At Eleos, we believe the path to better healthcare is paved with provider-focused technology. Our purpose-built AI platform streamlines documentation, simplifies compliance, and surfaces deep care insights to drive better client outcomes. Created using real-world care sessions and fine-tuned by our in-house clinical experts, our AI tools are scientifically proven to reduce documentation time by more than 70% and double client engagement. With Eleos, providers are free to focus less on administrative tasks and more on what got them into this field in the first place: caring for their clients.

©2026 by The Rowan Report, Peoria, AZ. All rights reserved. This article originally appeared in The Rowan Report. One copy may be printed for personal use: further reproduction by permission only. editor@therowanreport.com